You know who's using AI.

You don't know who's using it well.

Maestro shows you.

Your dashboards are broken

AI makes velocity metrics meaningless.

One engineer is mastering the craft of working with AI. The other is winging it. Your dashboards can't tell them apart.

The new craft of engineering

Well-run agent sessions produce excellent code. Poorly-run sessions produce AI slop.

The new blind spot

Which engineers are your AI leaders?

Some have already mastered the new craft.

Others are struggling with every session.

And some are still skeptics.

All of their sessions are invisible.

To you. And to each other.

No shared learning. No tribal knowledge. No visibility into what works. And what doesn't.

Maestro sees what dashboards don’t

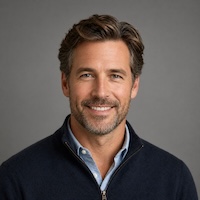

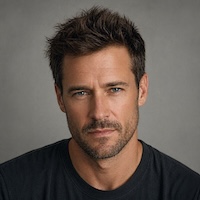

Two agent coding sessions.

Two outcomes.

Before you write code, list the security tradeoffs of PKCE vs. implicit flow for our mobile case.

Run the test against an expired refresh token — that's the case I'm worried about.

Just fix the token refresh bug.

Ok sure, go ahead.

How it works

Insights powered by a coding agent plugin.

Maestro installs as a plugin to Claude Code and Codex. It collects session data as engineers work. Deploy via your existing MDM in minutes.

Sessions linked to shipped PRs are analyzed across five dimensions of craft. Only work that reached a PR is evaluated — exploratory sessions stay private.

Engineers see their session review as a PR comment — same as a code review, visible to them first. Leaders see team-wide patterns on the Maestro dashboard.
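The rule that only PR-linked sessions are evaluated can be pictured with a small filter. This is a minimal sketch, not Maestro's implementation: the `Session` record, its `pr_number` field, and the `sessions_to_evaluate` helper are all hypothetical names chosen for illustration.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Session:
    """Hypothetical record for one agent coding session."""
    session_id: str
    # Assumption: None means the session never reached a PR.
    pr_number: Optional[int] = None


def sessions_to_evaluate(sessions: List[Session]) -> List[Session]:
    """Keep only sessions linked to a PR; exploratory ones are skipped."""
    return [s for s in sessions if s.pr_number is not None]
```

Under these assumptions, a session with no `pr_number` never enters the evaluation step at all, which is one way the "exploratory sessions stay private" guarantee could be enforced.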

The five dimensions of session craft

Not a vibe. A standard.

Five dimensions. One score per session. Calibrated against shipped outcomes.

Did the engineer stay scoped, or did the session drift?

HumanLayer's analysis of ~100k sessions found recall degrades when context fill exceeds 40%. Drifting sessions hit that threshold faster — and the agent stops following instructions reliably.
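To make the 40% figure concrete, context fill can be read as the fraction of the model's context window already occupied by tokens. The sketch below assumes token counts are available for the session; the function names and the 200,000-token window in the usage note are illustrative assumptions, not part of Maestro or of the cited analysis.

```python
# The ~40% figure cited above, expressed as a fraction.
CONTEXT_FILL_THRESHOLD = 0.40


def context_fill(used_tokens: int, window_tokens: int) -> float:
    """Fraction of the context window currently in use."""
    if window_tokens <= 0:
        raise ValueError("window_tokens must be positive")
    return used_tokens / window_tokens


def past_threshold(used_tokens: int, window_tokens: int,
                   threshold: float = CONTEXT_FILL_THRESHOLD) -> bool:
    """True once context fill strictly exceeds the threshold."""
    return context_fill(used_tokens, window_tokens) > threshold
```

With a hypothetical 200,000-token window, `past_threshold(90_000, 200_000)` is true (45% fill) while `past_threshold(50_000, 200_000)` is not (25% fill).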

Did they reach the solution with minimal wasted motion?

GitClear's 2026 study of 2,172 developer-weeks found AI power users author 4–10× more durable code than average AI users. Efficiency, not volume, is the separator.

Did they control the session, or let the agent run loose?

DORA 2025: code-review time rose 91% and PR size rose 154% in high-AI teams. Scoped sessions — one task per thread — are what keep review from collapsing.

Did they verify the agent's output before calling it done?

Stanford/MIT (Mar 2026): 14.3% of AI-generated code contained vulnerabilities vs. 9.1% for human-written. Skipping verification is where that gap surfaces in production.

Did they give the agent enough context to succeed?

Practitioners from Anthropic to Cursor converge on the same point: a tight plan before editing prevents most mid-session corrections. Boris Cherny attributes "one-shot" implementations to plan quality, not model quality.

Team momentum, at a glance

See which teams are leveling up — and which need coaching.

| Team | Members | Effectiveness | Strongest | Weakest |
|---|---|---|---|---|
| Platform Engineering | 8 | 63 (−5) | Agent Focus (+29) | Verification Rigor (−21) |
| Payments Engineering | 6 | 56 (−15) | Agent Focus (+12) | Prompt Quality (−19) |
| Security Engineering | 5 | 51 (+2) | Session Management (+5) | Verification Rigor (−18) |
| Product Engineering | 12 | 45 (+6) | Agent Focus (−3) | Verification Rigor (−25) |
| Data & Analytics | 7 | 45 (−2) | Session Management (±4) | Prompt Quality (−23) |

- Coach Platform and Payments on Verification Rigor.

- Coach Payments and Data on Prompt Quality.

- Security has the right habits — it just needs better verification.

"My team was shipping more code than ever. That's not the same as shipping better code."

CEO, Series B Fintech